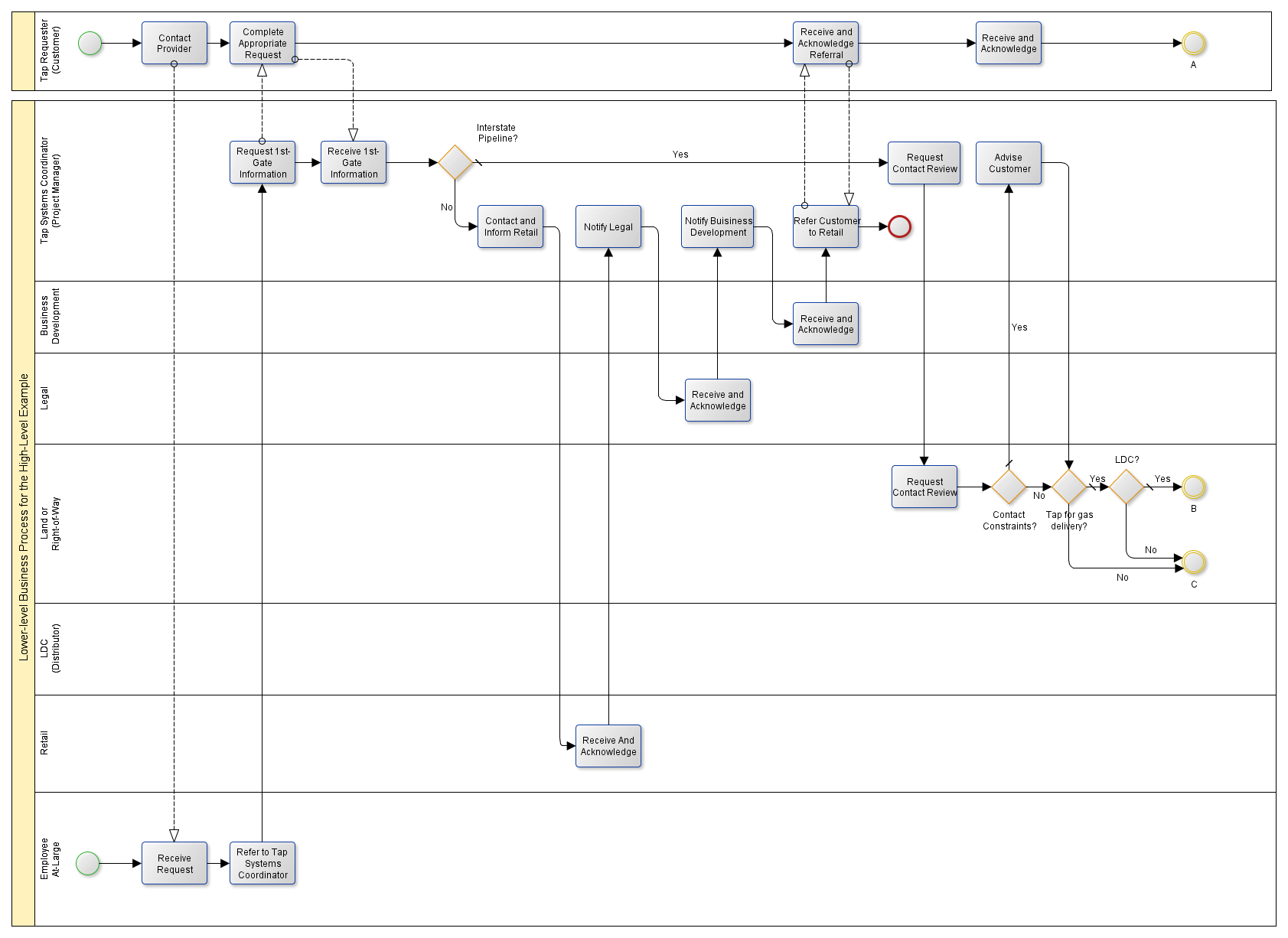

Figure 1 shows the dependency parse of the sentence “During a meeting, the team owner told Jordan that his services were no longer necessary tomorrow”.
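A dependency parse like the one in Figure 1 is usually stored as one (head, relation label) pair per word. From those pairs you get a directed graph G = (N, E) directly: the words are N, and the labeled head-to-dependent arcs are E. The sketch below assumes the CoNLL-U convention (1-based head indices, 0 for the root). The function name `dependency_graph` and the four-word parse are hypothetical illustrations. They are not the parse in Figure 1 or the authors' code.

```python
from typing import List, Set, Tuple

# A dependency arc: (head index, dependent index, relation label), 0-based.
Edge = Tuple[int, int, str]


def dependency_graph(words: List[str], heads: List[int],
                     labels: List[str]) -> Tuple[List[str], Set[Edge]]:
    """Build G = (N, E) from a parser's head/label output (hypothetical helper).

    heads[i] is the 1-based index of word i's head, with 0 meaning ROOT
    (CoNLL-U convention); labels[i] is the relation of that arc.
    """
    nodes = list(words)          # N: one node per word
    edges: Set[Edge] = set()     # E: directed head -> dependent arcs
    for i, (h, rel) in enumerate(zip(heads, labels)):
        if h == 0:
            continue             # the root word has no incoming arc from another word
        edges.add((h - 1, i, rel))  # shift the head to a 0-based node index
    return nodes, edges


# Hypothetical toy parse, NOT the parse shown in Figure 1.
words = ["The", "owner", "fired", "Jordan"]
heads = [2, 3, 0, 3]
labels = ["det", "nsubj", "root", "obj"]
N, E = dependency_graph(words, heads, labels)
print(sorted(E))  # [(1, 0, 'det'), (2, 1, 'nsubj'), (2, 3, 'obj')]
```

Every word except the root gets exactly one incoming labeled arc. That is why the number of edges grows with sentence length.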

Dependency parsing outputs a directed graph G = (N, E). Here N is the set of nodes, one for each word in the sentence, and E is the set of directed edges between them, each carrying a dependency relation label.

Event detection differs from other information extraction tasks. To obtain the best results, a model must understand not only the semantics of a sentence but also its structure. For this reason, fusing dependency syntactic information into event detection has become one of the current mainstream approaches, and existing event detection models that rely on dependency parsing have performed well. However, the dependency parses of long sentences are complex, because each word other than the root is attached to its head by a labeled directed edge. Not all of these edges provide useful guidance to an event detection model. In addition, the accuracy of dependency parsers decreases as sentence length increases, so parsing errors propagate into the model.

To solve these problems, we developed an event detection model based on a self-constructed dependency graph and a graph convolution network. First, we pruned the dependency parse tree according to a statistical analysis of the ACE2005 corpus, and combined it with the named entity features of the sentence to generate an undirected graph. Second, we implemented an enhanced graph convolution network that uses a multi-head attention mechanism to learn the representations of the nodes in the graph. Finally, a gating mechanism combines the semantic and structural (dependency) information of the sentence to perform event detection. A series of experiments on the ACE2005 corpus demonstrates that the proposed method improves the performance of the event detection model.
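The three steps of the model can be sketched in NumPy as follows. This is a minimal sketch under stated assumptions, not the authors' implementation. The post does not specify the following, so all of them are illustrative choices:

- the set of relation labels kept after pruning (`KEPT_RELATIONS`, hypothetical);
- the way named-entity tokens are linked (`entity_spans`, hypothetical);
- the attention-masked form of the multi-head GCN layer;
- the sigmoid gate g * h_sem + (1 - g) * h_struct.

```python
import numpy as np

rng = np.random.default_rng(0)

# HYPOTHETICAL: relation labels kept after pruning. The post derives its pruning
# rule from ACE2005 statistics, which are not given here.
KEPT_RELATIONS = {"nsubj", "obj", "obl", "nmod", "ccomp"}


def build_adjacency(n, edges, entity_spans):
    """Undirected adjacency with self-loops from pruned arcs plus entity links."""
    adj = np.eye(n)  # self-loops keep each word's own features
    for head, dep, rel in edges:
        if rel in KEPT_RELATIONS:              # drop arcs whose label is not kept
            adj[head, dep] = adj[dep, head] = 1.0  # symmetric, i.e. undirected
    for start, end in entity_spans:            # HYPOTHETICAL: fully link tokens start..end-1
        adj[start:end, start:end] = 1.0
    return adj


def attention_gcn_layer(h, adj, weights):
    """One GCN layer whose edge weights come from multi-head attention."""
    outputs = []
    for w_q, w_k, w_v in weights:  # one (query, key, value) projection per head
        q, k, v = h @ w_q, h @ w_k, h @ w_v
        scores = q @ k.T / np.sqrt(w_k.shape[1])
        scores = np.where(adj > 0, scores, -1e9)  # attend only along graph edges
        att = np.exp(scores - scores.max(axis=1, keepdims=True))
        att /= att.sum(axis=1, keepdims=True)     # row-wise softmax
        outputs.append(att @ v)                   # aggregate neighbour values
    return np.maximum(np.concatenate(outputs, axis=1), 0.0)  # concat heads, ReLU


def gated_fusion(h_sem, h_struct, w_g, b_g):
    """g * h_sem + (1 - g) * h_struct with g = sigmoid([h_sem; h_struct] @ w_g + b_g)."""
    z = np.concatenate([h_sem, h_struct], axis=1) @ w_g + b_g
    g = 1.0 / (1.0 + np.exp(-z))
    return g * h_sem + (1.0 - g) * h_struct


# Toy usage: 4 words, 8-dim features, 2 attention heads.
n, d, num_heads = 4, 8, 2
edges = {(1, 0, "det"), (2, 1, "nsubj"), (2, 3, "obj")}
adj = build_adjacency(n, edges, entity_spans=[(3, 4)])
h_sem = rng.normal(size=(n, d))  # stand-in for contextual word vectors from an encoder
weights = [tuple(rng.normal(size=(d, d // num_heads)) for _ in range(3))
           for _ in range(num_heads)]
h_struct = attention_gcn_layer(h_sem, adj, weights)  # shape (n, d)
fused = gated_fusion(h_sem, h_struct, rng.normal(size=(2 * d, d)), np.zeros(d))
print(fused.shape)  # (4, 8)
```

Masking the attention scores with the pruned adjacency has two effects. The parse still decides which words may exchange information, and each head learns its own weights for those edges. The self-loops guarantee that every softmax row has at least one unmasked entry. In a full model, the fused vectors would feed a per-token classifier over the event types.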
